This section describes FMERA, a priority-based, content-centric routing method for the IoT. By providing an efficient routing solution, FMERA aims to reduce packet loss and end-to-end delay in the IoT while limiting the energy that nodes spend on routing. The method assumes an IoT network in which nodes move within a limited area and each node has a unique, fixed ID. The assumptions made for this network are:

- All nodes know their own positions and can report their approximate location to other network components. This can be achieved with GPS or with localization based on the received signal strength from nearby nodes.

- Nodes are placed at random according to a uniform distribution, so the node density is assumed to be approximately the same throughout the network.

- Because the IoT network is heterogeneous, each node’s properties, such as its initial energy and maximum radio range, are treated as distinct from those of other nodes.

- Every node in the IoT system can inspect the contents of the messages in its buffer. A node can therefore identify the type of data being transmitted and notify other nodes of it.
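The assumptions above can be pictured with a small node model. The following is only a sketch: the class name `Node`, the helper names, and the energy and range intervals are illustrative assumptions and are not taken from FMERA.

```python
import math
import random
from dataclasses import dataclass


@dataclass
class Node:
    node_id: int           # unique, fixed identifier
    x: float               # known position (e.g. from GPS or RSSI localization)
    y: float
    initial_energy: float  # joules; heterogeneous across nodes
    radio_range: float     # metres; heterogeneous across nodes


def deploy(n, area, seed=0):
    """Place n nodes uniformly at random in an area x area square.

    The energy and range intervals below are illustrative assumptions.
    """
    rng = random.Random(seed)
    return [
        Node(i, rng.uniform(0, area), rng.uniform(0, area),
             initial_energy=rng.uniform(1.0, 2.0),
             radio_range=rng.uniform(20.0, 40.0))
        for i in range(n)
    ]


def can_reach(a, b):
    """True if node b lies within node a's radio range."""
    return math.hypot(a.x - b.x, a.y - b.y) <= a.radio_range
```

Because radio ranges differ between nodes, `can_reach(a, b)` and `can_reach(b, a)` may disagree, so reachability in this model is not necessarily symmetric.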

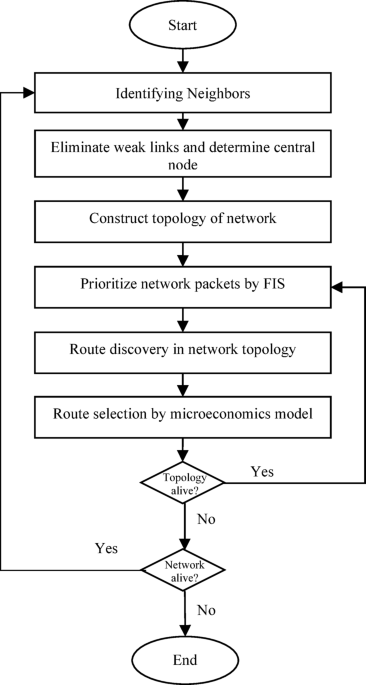

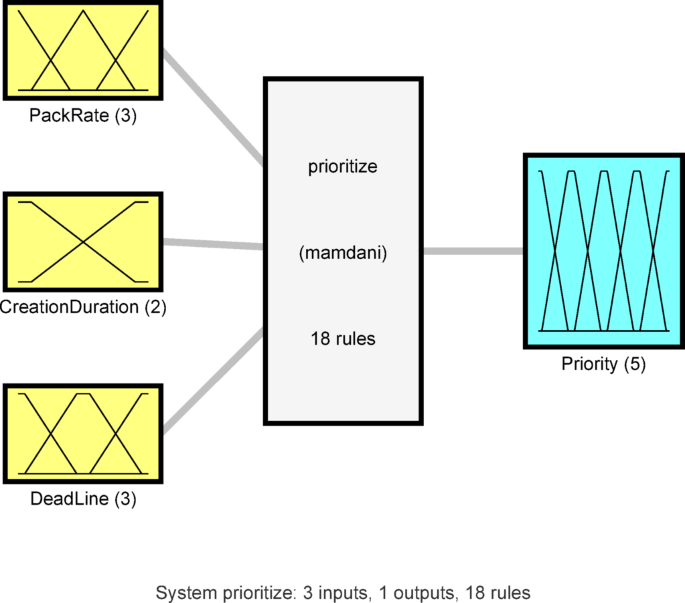

FMERA is a routing algorithm based on content and data priority that uses fuzzy logic and microeconomic theory (Fig. 1). A fuzzy inference system is deployed in every active node of the network to prioritize the packets in the node’s buffer. The proposed fuzzy model determines the data priority from the characteristics of the node and of the data packet: it takes the packet rate (PR) of the source node, the creation duration (CD) of the packet, and the deadline as inputs, and derives the sending priority of the packet from its fuzzy rule base. The rules are designed to reduce end-to-end delay (by taking the PR and the deadline into account) while giving a higher sending priority to data from sources with a high PR. In this way, delay is reduced without increasing the likelihood of congestion in the network.

Separating the prioritization and routing steps can improve the response time of intermediate nodes and reduce packet overhead, because in FMERA prioritization relies only on the node’s local information and on the fuzzy model deployed in it, so it can be performed as soon as a packet is placed in the node’s buffer. In addition, a model based on microeconomic theory determines the routes used to send data, based on the assigned priority and on the content of the data. In this model, the nodes that take part in routing each type of data are chosen so that they can route that data more effectively. FMERA consists of three main phases (Fig. 1):

1. Topology control
2. Prioritizing packets based on fuzzy logic
3. Data routing based on microeconomic theory

Each of these phases is explained below.

Topology control

The first step in FMERA is to form and control the network topology. The purpose of this step is to discover the routes between each node and the other nodes in the network. In FMERA, these routes are organized as a hierarchical structure. Before the topology is built, however, the weak links in the network must be identified. Because IoT network architectures vary, some links between nodes may be one-way. External factors such as background noise, or intrinsic factors such as unequal radio ranges, can cause this. A one-way link can either be ignored or turned into a two-way link with the help of an intermediate node. FMERA discards one-way links, because the alternative may increase energy consumption and communication overhead in the network. All one-way links are therefore detected and removed before the topology is built.

Topology construction starts by choosing the central node, which is the node with the most neighbors in the entire network. To find it, every node sends its neighbors a control message containing its number of active links; a node that receives such a message rebroadcasts it if the sender has more links than the receiver. Once this procedure has been repeated throughout the network, every node holds the ID of the node with the most active links, and that node is regarded as the center of the structure. If two or more nodes tie for the maximum number of neighbors, the node with the lowest unique ID is chosen as the central node, which guarantees a deterministic result.

The topology control stage is not a one-time operation. To accommodate node mobility and possible link failures, it runs when the network starts, is repeated periodically (the period is a configurable network parameter), and is also triggered when a node detects the loss of a large number of neighbors, which signals a major topological change. After the election, the central node begins building the topology.
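The pruning of one-way links and the election of the central node can be sketched as follows. This is a minimal simulation under stated assumptions, not the authors' implementation: the function names are hypothetical, the network is given as a dictionary mapping each node ID to the set of IDs it can transmit to, and message exchange is modelled with a simple work list rather than real radio broadcasts.

```python
def prune_one_way(reach):
    """Keep only links that exist in both directions.

    reach maps a node id to the set of node ids it can transmit to.
    """
    return {u: {v for v in vs if u in reach.get(v, set())}
            for u, vs in reach.items()}


def better(a, b):
    """Compare (degree, id) claims: higher degree wins, then lower id."""
    return (a[0], -a[1]) > (b[0], -b[1])


def elect_center(adj):
    """Flood (degree, id) claims until no node can improve its candidate."""
    # Every node starts by proposing itself with its own link count.
    best = {u: (len(vs), u) for u, vs in adj.items()}
    pending = list(adj)              # nodes that still have to broadcast
    while pending:
        u = pending.pop()
        for v in adj[u]:
            if better(best[u], best[v]):
                best[v] = best[u]    # v adopts the stronger claim...
                pending.append(v)    # ...and rebroadcasts it
    return {u: claim[1] for u, claim in best.items()}
```

In a connected network every node ends up with the same central node ID; a node left without any two-way link would simply elect itself in this sketch.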

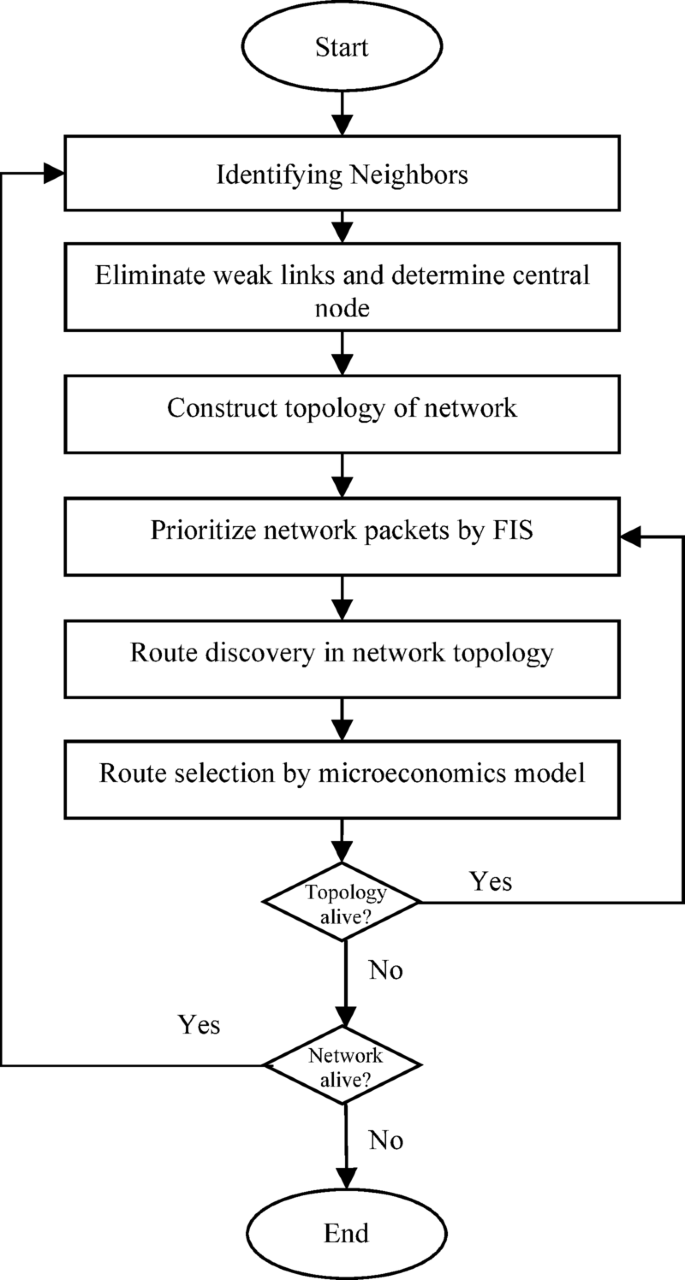

The central node initiates the topology control phase by transmitting a packet to every node in its immediate vicinity. The packet carries a stack that records the path the broadcast has followed. Any node that receives this packet for the first time pushes the address onto the stack and rebroadcasts the packet to its neighbors. It is important to note that this is a distributed process: the central node only triggers the broadcast, and each node then constructs and maintains its own local hierarchical view of the network from the messages it receives. When a node receives the broadcast packet, it updates its local tree structure. The node uses the stack in the received packet to identify the route the message took and creates parent-child links from it to build a local hierarchy. This avoids the scalability problems and the single point of failure of one centrally computed tree. Figure 2 shows an example of how the hierarchical structure is formed at a hypothetical node F.

An example of the topology control phase started by the central node: (a) message rebroadcast process starting from node A; (b) hierarchical structure formed for node F.

In Fig. 2a, node A starts the topology formation process, and Fig. 2b shows the hierarchical structure formed for node F. The maximum degree of the root node is set to 5 in order to better regulate the topology, avoid contention and disruption, and still provide an adequate number of potential routes.
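The stack-based broadcast can be illustrated with a short simulation. The sketch below rests on several assumptions that the text does not fix: the receiver appends its own ID to the stack, a node rebroadcasts only the first copy it receives but records links from every copy, and the degree limit of the root is not modelled. The function name and the four-node example graph are hypothetical.

```python
from collections import deque


def flood_topology(adj, center):
    """Simulate the broadcast; return each node's local parent -> children map."""
    local = {u: {} for u in adj}          # one local hierarchy per node
    forwarded = {center}                  # nodes that have already rebroadcast
    queue = deque([(center, [center])])   # (sender, stack carried by the packet)
    while queue:
        sender, stack = queue.popleft()
        for nbr in adj[sender]:
            if nbr in stack:
                continue                  # do not loop back along the trail
            trail = stack + [nbr]         # assumption: receiver pushes its own id
            # The receiver turns consecutive stack entries into parent-child links.
            for parent, child in zip(trail, trail[1:]):
                local[nbr].setdefault(parent, set()).add(child)
            if nbr not in forwarded:      # rebroadcast only on first receipt
                forwarded.add(nbr)
                queue.append((nbr, trail))
    return local


# Hypothetical example: F can be reached from A through B or through C.
adj = {"A": ["B", "C"], "B": ["A", "F"], "C": ["A", "F"], "F": ["B", "C"]}
view_of_f = flood_topology(adj, "A")["F"]
```

Here `view_of_f` contains both routes from A to F, which is the kind of alternative-path information the later routing step chooses between.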

Before sending a data packet toward its destination, each node updates the weights of the links one hop ahead in its local hierarchy. When several routes to the destination are available, the microeconomic model in FMERA uses these weights to choose the optimal route. The links of the graph are weighted according to the following measures:

1) Residual energy
2) Latency
3) The ratio of successfully received packets

The weight of a link leading to a node i is computed as follows. The energy of node i is normalized as \(Energ{y}_{i}=\frac{{e}_{i}}{{W}_{i}}\), where \({W}_{i}\) is the node’s initial energy in joules and \({e}_{i}\) is its current energy. A node with more remaining energy is preferred because it is less likely to run out of power early and disrupt the routing process.

The latency of a node is the time that passes between sending a packet and receiving the corresponding acknowledgement. Each active node can therefore estimate its transmission latency to another node as the mean time between sending a message and receiving its confirmation. In other words, the delay metric is derived from the communication history of the nodes. The delay of a node i is computed as \(Dela{y}_{i}=\left(\sum_{j=1}^{{N}_{p}}\frac{{t}_{j}}{{N}_{p}}\right)\), where \({N}_{p}\) is the number of packets that have been sent to node i in the past and \({t}_{j}\) is the delay of the j-th packet sent. Although this metric is an approximation, the mean round-trip time (RTT) of successfully acknowledged packets is a convenient and lightweight heuristic measure of link quality. This passive measurement avoids the high communication cost of active probing (e.g., transmitting special echo packets) and provides a dynamic, localized estimate of link performance that is useful for routing decisions.

The final criterion used to assess a node is its ratio of successfully forwarded packets. For node i, this is the number of messages it dispatched successfully (\({t}_{i}\)) divided by the total number of messages it received (\({r}_{i}\)), that is, \(Deliver{y}_{i}=\frac{{t}_{i}}{{r}_{i}}\). This criterion indicates how likely node i is to forward an incoming message to the next hop. Using these three criteria, each node computes the weight of the link leading to node i according to Eq. (1):

$$P_{i} = \frac{{Energy_{i} \times Delivery_{i} }}{{Delay_{i} }}$$

(1)
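The three criteria and Eq. (1) translate directly into code. The sketch below is only an illustration: the function names and the sample values are assumptions, and the handling of an empty history (raising an error) is a choice made here, since the text does not say how a node without past measurements is treated.

```python
def normalized_energy(e_i, w_i):
    """Energy_i = e_i / W_i (current energy over initial energy, both in joules)."""
    return e_i / w_i


def mean_delay(rtts):
    """Delay_i: mean time between sending a packet and receiving its ACK."""
    if not rtts:
        raise ValueError("no acknowledged packets recorded for this node")
    return sum(rtts) / len(rtts)


def delivery_ratio(dispatched, received):
    """Delivery_i = t_i / r_i (successfully dispatched over received messages)."""
    if received == 0:
        raise ValueError("node has not received any messages yet")
    return dispatched / received


def link_weight(e_i, w_i, rtts, dispatched, received):
    """P_i = Energy_i * Delivery_i / Delay_i, as in Eq. (1)."""
    return (normalized_energy(e_i, w_i)
            * delivery_ratio(dispatched, received)
            / mean_delay(rtts))


# Hypothetical node: 1.5 J left of 2 J, RTTs of 20 ms and 40 ms, 90 of 100 forwarded.
p = link_weight(1.5, 2.0, [0.02, 0.04], 90, 100)
```

With these sample values the weight is 0.75 × 0.9 / 0.03 = 22.5. Because the delay is not normalized, the magnitude of \({P}_{i}\) depends on the time unit used for the RTTs.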

The value of \({P}_{i}\) in Eq. (1) expresses the value of establishing a connection with node i, and the microeconomic model in the proposed method uses it as one of the path selection criteria. Each downstream node piggybacks its energy level and the successful routing rate on the ACK packets returned to the upstream nodes. The control packets exchanged in the topology control phase incur communication overhead. This overhead is necessary to keep routing information up to date in a dynamic network, and it is included in the overall energy consumption estimates in our simulation results to allow a fair and complete performance assessment. In the second step of FMERA, each data packet is prioritized when it is stored in the node’s buffer.

Packet prioritizing by fuzzy logic

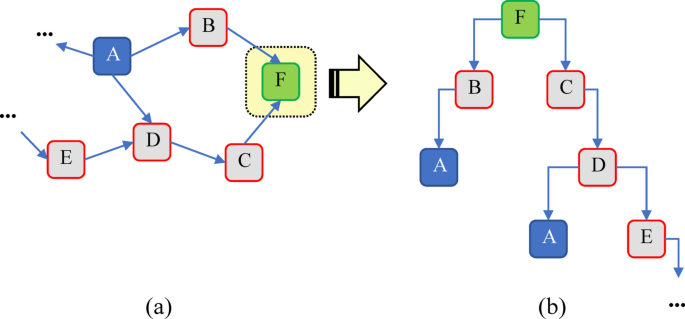

Packet prioritization is a local decision that each node makes about the data in its own buffer. This design provides scalable, decentralized, and fast decision-making without requiring knowledge of the global network state. The priority computed in this step is then used as an important input to the distributed routing step (described in Section “Content-centric routing using microeconomic theory”), which identifies the optimal next hop for the prioritized packet within the known network topology. In FMERA, every active node is equipped with a fuzzy inference model that determines the sending priority of each packet in its buffer from information about the source node and the characteristics of the packet. The structure of this fuzzy model is shown in Fig. 3.

The proposed fuzzy model for determining the priority of data packets.

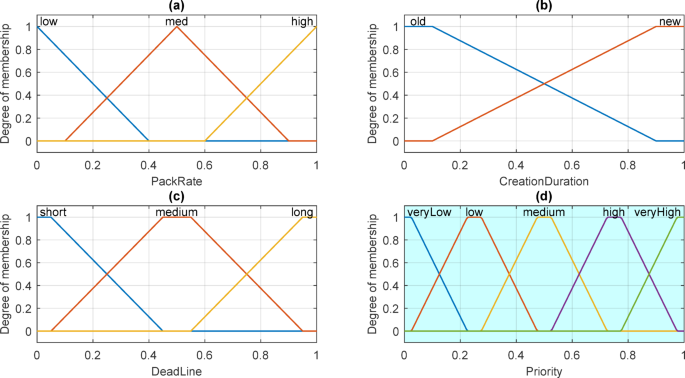

As shown in Fig. 3, the fuzzy model used as the objective function is a Mamdani fuzzy system [29] with three input variables, one output variable, and eighteen fuzzy rules. Figure 4 shows the membership functions of the input and output variables of this fuzzy system.

Membership functions of fuzzy variables in FMERA.

In Fig. 4a, the membership functions of the input variable PR by the source node are displayed. Figure 4b and c also depict the membership functions of the input variables CD and deadline, respectively. Finally, the output fuzzy variable of the packet priority with 5 membership functions is given in Fig. 4d. In the following, it is explained how to calculate each of these variables.

The first input of the fuzzy system describes the PR of the source node and has three membership functions: “low,” “medium,” and “high.” In the proposed fuzzy model, the PR of the source node is defined by Eq. (2):

$$I_{1} = \frac{{R_{s} }}{{\frac{1}{N}\sum\nolimits_{{i = 1}}^{N} {R_{i} } }}$$

(2)

In Eq. (2), \({R}_{i}\) is the packet generation rate of node i, \({R}_{s}\) is the packet generation rate of the source node, and N is the number of nodes in the network. Dividing the packet rate of the source node by the average packet rate of the entire network yields a relative measure of traffic generation.

The CD is the second fuzzy input variable in the proposed model. It has two membership functions, “old” and “new,” and is defined by Eq. (3):

$$I_{2} = \frac{{T - T_{p} }}{{D_{p} }}$$

(3)

Here \(T\) is the current time, \({T}_{p}\) is the time at which the current packet was generated, and \({D}_{p}\) is the time after which the packet expires. Dividing by \({D}_{p}\), the total valid lifetime of the packet, normalizes this input so that it represents the fraction of the lifetime that has elapsed. The third fuzzy input variable is the packet delivery deadline, which has three membership functions, “short,” “medium,” and “long,” and is defined by Eq. (4):

$$I_{3} = 1 - \frac{T}{{D_{p} }}$$

(4)
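The three fuzzy inputs of Eqs. (2)–(4) can be computed as in the following sketch. The function names and sample values are assumptions made for illustration. The equations are transcribed literally, with the packet lifetime \({D}_{p}\) from the text used as the denominator in Eqs. (3) and (4); the text does not state the time reference for \(T\), so the example simply uses one common clock for all times.

```python
def packet_rate_input(r_s, rates):
    """I1 = R_s / mean(R_i): source packet rate relative to the network average."""
    return r_s / (sum(rates) / len(rates))


def creation_duration_input(now, t_p, d_p):
    """I2 = (T - T_p) / D_p: fraction of the packet lifetime that has elapsed."""
    return (now - t_p) / d_p


def deadline_input(now, d_p):
    """I3 = 1 - T / D_p, transcribed literally from Eq. (4)."""
    return 1 - now / d_p


# Hypothetical values: three nodes generating 2, 4 and 6 packets per second,
# a packet created at t = 10 s with a lifetime of 80 s, evaluated at t = 30 s.
i1 = packet_rate_input(6.0, [2.0, 4.0, 6.0])
i2 = creation_duration_input(30.0, 10.0, 80.0)
i3 = deadline_input(30.0, 80.0)
```

For these values the source generates 1.5 times the network average, a quarter of the packet lifetime has elapsed, and the deadline input is 0.625.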

Table 1 lists the rules used by the fuzzy system to prioritize data packets. The output of the fuzzy system is a fuzzy variable that gives the priority of each data packet based on the input variables described above. The proposed fuzzy model assigns priorities so as to maximize the probability of successful packet delivery.

These rules were chosen to produce a balanced prioritization scheme. For example, a packet from a source with a high PR that is also old (large CD) and has a short deadline is given a veryHigh priority (Rules 7, 13, 14). This prevents high-traffic sources from causing buffer overflow and, at the same time, keeps urgent, aging packets from being dropped. Conversely, a new packet from a low-rate source with a long deadline is assigned a veryLow priority (Rule 6), because it is neither urgent nor likely to cause congestion. This mapping directs network resources to the most urgent packets, which maximizes the delivery ratio of valuable data and helps meet application deadlines.
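The mechanics of Mamdani inference (fuzzify, combine with min, aggregate with max, defuzzify by centroid) can be sketched as below. This is not the FMERA rule base: the membership function breakpoints are hypothetical stand-ins for Fig. 4, and only the two rules described in the paragraph above are encoded, whereas the full set of eighteen rules is given in Table 1. All names are illustrative.

```python
def tri(x, a, b, c):
    """Triangular membership with feet a, c and peak b (shoulder if a==b or b==c)."""
    if x <= a:
        return 1.0 if a == b else 0.0
    if x >= c:
        return 1.0 if b == c else 0.0
    if x <= b:
        return (x - a) / (b - a)
    return (c - x) / (c - b)


# Hypothetical membership functions (assumed shapes, not read from Fig. 4).
PR = {"low": (0, 0, 1), "medium": (0.5, 1, 1.5), "high": (1, 2, 2)}
CD = {"new": (0, 0, 1), "old": (0, 1, 1)}
DEADLINE = {"short": (0, 0, 0.5), "medium": (0, 0.5, 1), "long": (0.5, 1, 1)}
OUT = {"veryLow": (0, 0, 0.25), "low": (0, 0.25, 0.5), "medium": (0.25, 0.5, 0.75),
       "high": (0.5, 0.75, 1), "veryHigh": (0.75, 1, 1)}

# Only the two rules spelled out in the text; Table 1 holds the complete base.
RULES = [(("high", "old", "short"), "veryHigh"),
         (("low", "new", "long"), "veryLow")]


def priority(i1, i2, i3, steps=200):
    """Return a crisp priority in [0, 1], or None if no rule fires."""
    fired = {}
    for (pr, cd, dl), out in RULES:
        # AND of the three antecedents is taken as the minimum membership.
        strength = min(tri(i1, *PR[pr]), tri(i2, *CD[cd]), tri(i3, *DEADLINE[dl]))
        fired[out] = max(fired.get(out, 0.0), strength)
    num = den = 0.0
    for k in range(steps + 1):
        y = k / steps
        # Clip each output set by its rule strength, aggregate with max.
        mu = max(min(s, tri(y, *OUT[o])) for o, s in fired.items())
        num += y * mu
        den += mu
    return num / den if den > 0 else None
```

With this reduced rule base, `priority(2.0, 1.0, 0.0)` falls in the veryHigh region and `priority(0.0, 0.0, 1.0)` in the veryLow region, while inputs that match neither rule return `None`; a complete rule base would cover every input combination.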

After prioritizing the packets in the buffer memory of each node, data routing is done based on the prioritization determined in the fuzzy model.

Content-centric routing using microeconomic theory

FMERA routes prioritized packets with a model based on microeconomic theory. This model is implemented in a fully distributed way: in contrast to a centralized approach, each forwarding node along a path runs the decision-making process independently. Whenever a node has a prioritized packet to transmit and more than one next-hop option in its local topology, it applies the microeconomic model presented here to choose the best path. Routing decisions are therefore adaptive, scalable, and made hop by hop across the network.

This model receives the information related to the weight of the path steps, packet priority, and data processing capability as input, and based on this information, determines the optimal path for sending data. It should be noted that this decision-making model will be used if more than one path is available to transfer the data packet to the destination. According to these explanations and the materials mentioned in the previous section, the proposed microeconomic model uses three categories of information to route each data packet:

1. Path weight (g1): the sum of the weights computed in the topology control phase for the links on the path. Each link weight is calculated with Eq. (1) from the energy, delay, and successful delivery rate criteria.

2. Packet priority (g2): the priority determined by the fuzzy model in the node, as described in the previous section.

3. Data processing capability (g3): indicates whether the node can process the data type of the current packet. If the node can process the packet’s data type (and integrate it with data of the same type), \({g}_{3}=1\); otherwise \({g}_{3}=0\).

Using these criteria, an evaluation vector \(R={\left[{g}_{1},{g}_{2},{g}_{3}\right]}^{T}\) is formed for each ready-to-send packet. The significance of each factor may vary depending on the environment in which the routing algorithm is applied. Each variable is therefore assigned a weight, and the weights are collected in a vector \(W=\left[{w}_{1},{w}_{2},{w}_{3}\right]\), where \({w}_{1}\), \({w}_{2}\), and \({w}_{3}\) are the weights of path weight, packet priority, and data processing capability, respectively. The score of each candidate path is then computed by combining the two vectors through Eq. (5):

$$\omega =W\times R$$

(5)
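Eq. (5) is a weighted sum of the three criteria, as the following sketch shows. The function name, the default weights, and the sample values are assumptions; the text leaves the choice of \(W\) to the deployment and does not say whether \({g}_{1}\) is rescaled before it is combined with the other two criteria.

```python
def path_score(link_weights, packet_priority, can_process, w=(0.4, 0.4, 0.2)):
    """omega = W x R with R = [g1, g2, g3]^T, as in Eq. (5).

    The default weights w are illustrative assumptions only.
    """
    g1 = sum(link_weights)               # path weight: sum of P_i over the path
    g2 = packet_priority                 # output of the fuzzy model
    g3 = 1.0 if can_process else 0.0     # data processing capability
    return w[0] * g1 + w[1] * g2 + w[2] * g3


# Hypothetical path with two links, a medium-priority packet, and a node
# that is able to process the packet's data type.
omega = path_score([0.5, 0.25], 0.5, True, w=(0.5, 0.25, 0.25))
```

For this example the score is 0.5 × 0.75 + 0.25 × 0.5 + 0.25 × 1 = 0.75.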

In Eq. (5), a higher value of ω indicates a more valuable path for routing. Using this path score and microeconomic theory, the method then selects the most suitable path for the data and allocates it for sending. The network and the node are treated as two players in the microeconomic model. The network has two strategies, \({s}_{1}\) and \({s}_{2}\), which denote assigning or not assigning the current path to the node, respectively. The node also has two strategies, \({t}_{1}\) and \({t}_{2}\), which denote using or not using the designated path to send data, respectively. The game matrices of the network and the node can then be defined by Eqs. (6) and (7):

$$N = \left[ {\begin{array}{*{20}c} {n_{{11}} } & {n_{{12}} } \\ {n_{{21}} } & {n_{{22}} } \\ \end{array} } \right]$$

(6)

$$U=\left[\begin{array}{cc}{u}_{11}& {u}_{12}\\ {u}_{21}& {u}_{22}\end{array}\right]$$

(7)

The rows of both matrices correspond to the strategies of the network, and the columns correspond to the strategies of the node. The element \({n}_{ij}\) of matrix N represents the network’s route assignment under strategies \({s}_{i}\) and \({t}_{j}\), and the element \({u}_{ij}\) of matrix U represents the node’s path selection under the same strategies.

After the score of each path has been computed with Eq. (5), \(\omega\) is compared with a predefined fixed threshold \({\omega }_{0}\). The threshold is set empirically, based on testing and on the conditions in which the routing method is applied. If \(\omega >{\omega }_{0}\), the path exceeds the characteristics expected by the node. If \(\omega ={\omega }_{0}\), the path exactly matches the qualities required by the node. If \(\omega <{\omega }_{0}\), the path cannot fulfill the requirements of the node. Based on these ideas, the network and node matrices are determined by Eqs. (8) and (9):

$$N=\left[\begin{array}{cc}\frac{c\times \omega }{{\omega }_{0}}-c& \frac{c\times \omega }{{\omega }_{0}}-c\\ -\mu \left(\frac{c\times \omega }{{\omega }_{0}}-c\right)& -\left(\frac{c\times \omega }{{\omega }_{0}}-c\right)\end{array}\right]$$

(8)

$$U=\left[\begin{array}{cc}\frac{\omega }{c\times {\omega }_{0}}-\frac{1}{c}& -\mu \left(\frac{\omega }{c\times {\omega }_{0}}-\frac{1}{c}\right)\\ \frac{\omega }{c\times {\omega }_{0}}-\frac{1}{c}& -\left(\frac{\omega }{c\times {\omega }_{0}}-\frac{1}{c}\right)\end{array}\right]$$

(9)

In these equations, c is the cost of using the current path. In matrix N, \(\frac{c\times \omega }{{\omega }_{0}}\) is the virtual utility the network obtains from the current path and c is its real utility. The negative entries in the second row of N represent the network resources that are wasted if the network refuses to assign the route to the node. The parameter μ is a penalty factor; a value greater than one means that denying the node’s request to use the path harms both the node making the request and any nodes that request the path later. Similarly, in matrix U, \(\frac{\omega }{c\times {\omega }_{0}}\) is the node’s virtual utility from the current path and \(\frac{1}{c}\) is its real utility. The μ parameter and the negative entries in the second column of U play the same role as their counterparts in N. If the elements of these matrices that correspond to the current strategy are negative, the requirements of the network or of the node are not being met. Consequently, the route that has the greatest ω score and also scores well in the node and network matrices is chosen for the node. After the route has been determined, the data are sent.
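The payoff matrices of Eqs. (8) and (9) and the final choice can be sketched as follows. The matrices follow the equations term by term, but the selection rule is an interpretation: the text only states that the path with the greatest ω and good scores in both matrices is chosen, so this sketch assumes that a path qualifies when the assign/use payoffs \({n}_{11}\) and \({u}_{11}\) are non-negative and returns `None` when no path qualifies. The function names and parameter values are hypothetical.

```python
def payoff_matrices(omega, omega0, c, mu):
    """Build N (network) and U (node) as in Eqs. (8) and (9)."""
    a = c * omega / omega0 - c            # network: virtual minus real utility
    b = omega / (c * omega0) - 1 / c      # node: virtual minus real utility
    N = [[a, a], [-mu * a, -a]]           # rows: s1 assign, s2 do not assign
    U = [[b, -mu * b], [b, -b]]           # columns: t1 use, t2 do not use
    return N, U


def select_path(scores, omega0, c, mu):
    """Pick the candidate with the largest omega among acceptable paths.

    scores maps a path name to its omega from Eq. (5). Treating a path as
    acceptable when n11 >= 0 and u11 >= 0 is an assumption of this sketch.
    """
    best_name, best_omega = None, None
    for name, omega in scores.items():
        N, U = payoff_matrices(omega, omega0, c, mu)
        if N[0][0] < 0 or U[0][0] < 0:
            continue                      # path falls short of the threshold
        if best_omega is None or omega > best_omega:
            best_name, best_omega = name, omega
    return best_name


# Hypothetical candidates with threshold 1.0, path cost 2.0 and penalty factor 2.0.
chosen = select_path({"p1": 0.8, "p2": 1.5, "p3": 1.2}, 1.0, 2.0, 2.0)
```

In this example `p1` is rejected because its score is below the threshold, and `p2` is preferred over `p3` because it has the larger ω.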